TL;DR

- The “platform era” of rigid, six-figure security contracts is colliding with budget reality and faster-moving threats.

- 2026 predictions point to a massive operational shift: the end of “shelf-ware” (53% of licenses unused) and the collapse of “checkbox compliance.”

- CFOs are killing bloated stacks, “vibe coding” creates shadow risks no one can see, and governance must turn into a living workflow.

- The future belongs to flexible, just-in-time security that adapts as fast as the threats.

You bought the platform hoping it would cover everything. Eighteen months later, you’re still waiting for that feature update while your developers ship AI-generated code faster than your security tools can detect it. In 2025, the speed of AI development has outpaced vendor roadmaps, CFOs are targeting the waste in your security stack, and regulators want operational proof instead of policy documents.

The 2026 predictions below come from conversations across our team at Sola and from feedback we’ve been getting from users, colleagues, and members of the cybersecurity community. Each person calls out the shift they see coming based on what security teams are dealing with right now.

The result is six cybersecurity predictions for 2026, from six different perspectives, on why the operating model is changing from buying rigid platforms to building flexible workflows that adapt instantly.

CFOs will kill half your security stack, and they’re right.

CISOs are sitting on millions of dollars in unused licenses. The dirty secret of enterprise security is shelf-ware, those tools you bought two years ago that now collect dust in your tech stack. Industry benchmarks show that roughly half of all SaaS licenses go unused, costing the average organization over $135,000 annually in completely dead spend. For larger enterprises, that number jumps into the millions.

Your CFO already knows about the problem. Finance teams report that 44% of organizations now face direct pressure to cut SaaS spending. The bloated stack era is ending because renewal committees won’t tolerate carrying that much waste anymore.
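To put a number on the shelf-ware described above, a team could start with something as small as the sketch below. It is only an illustration: the `License` record, the 90-day idle threshold, and the sample seats are assumptions made for this example, not figures from the benchmarks cited here.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class License:
    tool: str                   # hypothetical tool name
    annual_cost: float          # cost of one seat per year
    last_used: Optional[date]   # None means the seat was never used


def unused_spend(licenses, today, idle_days=90):
    """Return (share of seats that are idle, annual cost of those seats)."""
    cutoff = today - timedelta(days=idle_days)
    idle = [l for l in licenses if l.last_used is None or l.last_used < cutoff]
    share = len(idle) / len(licenses) if licenses else 0.0
    return share, sum(l.annual_cost for l in idle)


if __name__ == "__main__":
    # Made-up seats, purely for illustration.
    seats = [
        License("scanner", 1200.0, date(2026, 1, 10)),
        License("scanner", 1200.0, None),
        License("siem", 3000.0, date(2025, 6, 1)),
        License("siem", 3000.0, date(2025, 12, 20)),
    ]
    share, cost = unused_spend(seats, today=date(2026, 1, 15))
    print(f"{share:.0%} of seats idle, {cost:,.0f} per year")
```

The idle threshold is the main judgment call: a shorter window flags more seats, so it is worth agreeing on it with finance before the renewal conversation.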

2026 marks the unbundling. Teams will stop shopping for rigid platforms that promise everything and start questioning whether their stack would even survive a realistic AI for SaaS security assessment. The pressure comes from finance, but the operational reality drives it: carrying 50+ disconnected tools creates integration gaps and the kind of alert fatigue that sends your best people to burnout.

The signals from all those tools show up as alerts: “suspicious login,” “unusual data transfer,” “new admin role assigned,” and so on. Most of them are technically “correct,” in that they match a rule, but that doesn’t mean they matter.

The shift moves toward just-in-time security, where you spin up exactly the visibility or control you need when you need it. Flexible beats comprehensive when your budget can’t support both.

Start cutting waste with our SaaS checklist.

Solo (well…😉) security teams finally get their equalizer

The skills gap in the industry isn’t closing, and small teams are outnumbered. Security programs at most companies are run by overstretched teams trying to cover cloud security, identity management, compliance frameworks, incident response, and more. The traditional answer was to hire more people or outsource critical functions. Hiring takes budget most teams don’t have. Outsourcing sacrifices business knowledge and accountability.

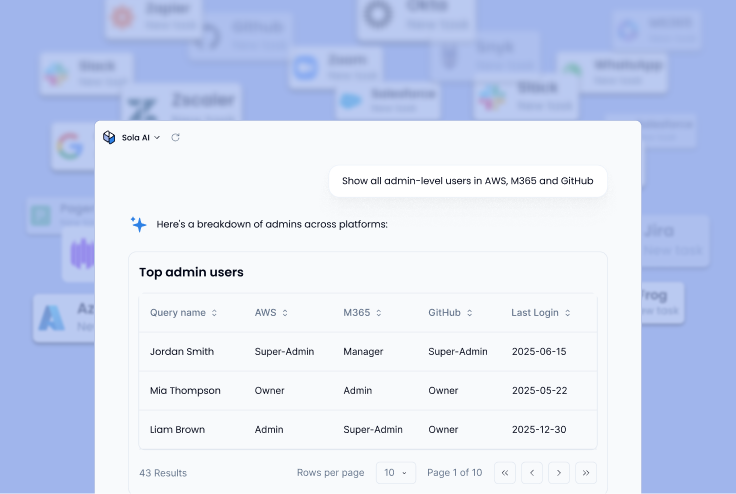

AI is the equalizer. The adoption of AI assistants in cybersecurity is already proving that small teams no longer need armies to operate credibly. Natural language interfaces let you query your security posture without writing custom scripts. No-code builders turn questions into dashboards, alerts, and agentic workflows in minutes instead of weeks, if not months.

This means the security poverty line drops dramatically. Small teams stop outsourcing their thinking and start owning their posture directly. A single CISO can now get instant answers across cloud infrastructure, SaaS applications, and identity providers, without waiting for an engineer to write queries or configure integrations.

The shift isn’t about replacing security expertise. AI handles complex analysis and data wrangling that used to consume 80% of a small team’s time, freeing them to focus on actual decision-making and strategy.

Checkbox compliance dies in 2026

We’re living in the AI governance paradox. Recent research found that 90% of organizations claim to have an AI information management framework, but only 30% say it actually classifies and protects data effectively. The gap between confidence and control has never been wider, and 2026 is when that illusion breaks.

The era of trust without verification is ending. Regulations like the EU AI Act and DORA demand operational proof, not policy documents sitting in SharePoint. When auditors ask to see your AI governance in action, they want logs, access controls, and evidence that your policies actually run. A beautifully formatted PDF explaining your AI principles won’t pass the test.

2026 kills checkbox compliance. Governance shifts from an annual document review to a living workflow that verifies controls continuously. Your AI policy needs to become executable code that runs automated checks, flags violations in real time, and produces audit trails without someone manually compiling spreadsheets.
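As a rough illustration of what “policy as executable code” could look like, here is a minimal sketch. The rule names, the asset fields (`classification`, `access_logging`, `owner`), and the shape of the audit entries are all assumptions made for this example; they do not come from any regulation or from a specific product.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy: each rule is a name plus a check over one asset record.
POLICY = {
    "data_classified": lambda a: a.get("classification") is not None,
    "access_logging_enabled": lambda a: a.get("access_logging") is True,
    "owner_assigned": lambda a: bool(a.get("owner")),
}


def evaluate(assets, policy=POLICY, now=None):
    """Run every rule against every asset and return audit-trail entries."""
    now = now or datetime.now(timezone.utc)
    trail = []
    for asset in assets:
        for rule, check in policy.items():
            trail.append({
                "checked_at": now.isoformat(),
                "asset": asset["id"],
                "rule": rule,
                "passed": check(asset),
            })
    return trail


def violations(trail):
    """Keep only the entries where a rule failed."""
    return [entry for entry in trail if not entry["passed"]]


if __name__ == "__main__":
    # Made-up inventory, purely for illustration.
    assets = [
        {"id": "model-a", "classification": "internal",
         "access_logging": True, "owner": "ml-team"},
        {"id": "model-b", "access_logging": False},
    ]
    print(json.dumps(violations(evaluate(assets)), indent=2))
```

Run on a schedule or on every change, the full trail (passes included) is what serves as evidence; the violations list is what gets flagged to a human.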

Security teams that treat governance as a living system will stay compliant. Teams still relying on static policies will discover the hard way that regulators no longer accept good intentions as evidence.

AI agents will replace point-solution assembly

Security teams juggle dozens of specialized tools, each with its own dashboard and data model. Every new threat or compliance requirement adds another platform to the stack. The challenge isn’t lack of visibility, it’s that visibility gets fragmented across too many places to be actionable.

2026 marks the shift from tool-based security to intelligence-based security. Instead of assembling products and stitching integrations, the future stack is built on three principles:

- A unified cloud-native data platform that ingests once and enriches continuously

- Specialized AI agents that reason over shared context rather than isolated silos

- Composable outcomes, where teams consume personalized insights in minutes by assembling reusable building blocks.

Traditional SOC analytics is built around one question: “What just happened, and how bad is it?” Shift-left security analytics flips that to: “Given our current identities, permissions, systems, and data, what are the most dangerous things that could happen?”
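One way to picture the “what could happen” question is as a search over a graph of identities, roles, and resources. The sketch below is an assumption-heavy toy: the `EDGES` map, the node naming scheme, and the idea that sensitive resources start with `bucket:` are all hypothetical, and a real environment would derive the graph from its own identity and permission data.

```python
from collections import deque

# Hypothetical edges meaning "X can act as, or can access, Y".
EDGES = {
    "user:intern": ["role:ci-runner"],
    "role:ci-runner": ["role:deployer"],
    "role:deployer": ["bucket:customer-data"],
    "user:analyst": ["dashboard:metrics"],
}


def shortest_path(edges, start, is_sensitive):
    """Breadth-first search from start to the nearest sensitive node.

    Returns the path as a list of node names, or None if nothing
    sensitive is reachable.
    """
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if is_sensitive(node):
            return path
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


if __name__ == "__main__":
    def is_data(node):
        return node.startswith("bucket:")

    for identity in ("user:intern", "user:analyst"):
        print(identity, "->", shortest_path(EDGES, identity, is_data))
```

In this toy graph the intern account reaches customer data in three hops through chained roles, which is the kind of finding that never appears as an alert because nothing has happened yet.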

In this model, security becomes an ongoing interaction between AI-driven reasoning systems and the security practitioners guiding them. Rather than navigating separate CSPM, identity, and endpoint tools, security teams will work with lightweight agents that already understand their risk landscape, as they share a common data foundation.

Point solutions emerged because technical limits forced us to slice risk into manageable pieces. Those limits no longer exist. The security stack of the future won’t be assembled, it will adapt and evolve around the organization it protects. Teams that embrace agent orchestration will build context-aware security in days. Teams still assembling point solutions will spend months on integration projects that never finish.

Security analytics move to real-time reasoning

Flexible security is impossible if your data is trapped in a slow, expensive SIEM. Traditional security analytics work like this: logs pile up in storage, then you write queries to search through them later. By the time you get an answer, the context is stale and the window to act has closed.

The shift-left model for security analytics moves the logic from the query phase to the ingest phase. Instead of storing raw logs and searching them later, the system normalizes and reasons over data as it enters. The difference matters because analyzing data at ingestion time turns logging into live reasoning.

When your security data gets processed and structured immediately, natural language questions yield instant answers. You can ask “which S3 buckets allow public access” and get a table in seconds instead of waiting for a query to crawl through terabytes of logs. The speed enables just-in-time security workflows where you build the exact visibility you need when a threat emerges, not three days later.
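A minimal sketch of the ingest-time idea, assuming a made-up event shape (`bucket`, `acl`, `time`) and an in-memory SQLite table standing in for the data platform. The point is only that each event is normalized into current state as it arrives, so the “which buckets are public” question becomes a lookup rather than a log search.

```python
import sqlite3


def open_store():
    """Create an in-memory table that holds the current state per bucket."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE buckets (name TEXT PRIMARY KEY, public INTEGER, seen_at TEXT)"
    )
    return db


def ingest(db, raw_event):
    """Normalize one raw event as it arrives and overwrite the bucket's state."""
    # Hypothetical raw shape: {"bucket": str, "acl": [str, ...], "time": str}
    public = int("AllUsers" in raw_event.get("acl", []))
    db.execute(
        "INSERT OR REPLACE INTO buckets (name, public, seen_at) VALUES (?, ?, ?)",
        (raw_event["bucket"], public, raw_event["time"]),
    )


def public_buckets(db):
    """Answer the question directly from normalized state."""
    rows = db.execute("SELECT name FROM buckets WHERE public = 1 ORDER BY name")
    return [row[0] for row in rows]


if __name__ == "__main__":
    db = open_store()
    # Made-up events, purely for illustration.
    ingest(db, {"bucket": "logs", "acl": ["AllUsers"], "time": "2026-01-01T10:00:00Z"})
    ingest(db, {"bucket": "backups", "acl": ["AllUsers"], "time": "2026-01-01T10:01:00Z"})
    ingest(db, {"bucket": "backups", "acl": [], "time": "2026-01-01T10:05:00Z"})
    print(public_buckets(db))
```

The trade-off is that the normalization logic has to be decided up front; questions nobody anticipated still need the raw events kept somewhere.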

Teams that shift analytics left in 2026 can operate flexibly. Teams still dependent on traditional query-everything-later systems will keep missing the window where fast action actually matters.

AI-generated code will be your toughest blind spot

Developers are shipping code at warp speed, and security has no idea what’s in it. AI coding assistants like Cursor and Claude Code let developers describe functionality in plain language and ship entire applications without ever reviewing the underlying logic. Research shows nearly half of AI-generated code contains security flaws despite appearing production-ready.

You can’t block vibe coding any more than you could block developers from using Stack Overflow, even as vibe coding security vulnerabilities start showing up in production.

The tools are too accessible, the productivity gains too obvious, and your developers already adopted them last quarter whether you approved the purchase or not. Shadow AI now represents 48% of enterprise applications.

2026 demands shadow AI governance. The prediction is straightforward: vibe coding becomes the number one source of untracked vulnerabilities because security teams can’t see what AI generated versus what humans wrote. Traditional code scanning tools weren’t designed to catch hallucinated service accounts, ghost permissions, or the specific patterns that emerge when AI stitches together code from its training data.

Security teams need to detect when AI tools generate code and scan the output immediately for AI-specific risks. The alternative is discovering vulnerabilities in the CI/CD pipeline – or worse, in production – after attackers have already exploited them.
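As one narrow example of an AI-specific check, the sketch below looks for service account names in source text that do not exist in a known inventory, which is one way a hallucinated account might surface. The `svc-` naming convention, the regex, and the inventory set are assumptions made for this illustration; it does not detect whether code was AI-generated, and it is no substitute for a real scanner.

```python
import re

# Hypothetical convention: service accounts are named "svc-<something>".
SERVICE_ACCOUNT = re.compile(r"\bsvc-[a-z0-9-]+\b")


def unknown_service_accounts(source, inventory):
    """Return service account names referenced in source but absent from inventory."""
    found = set(SERVICE_ACCOUNT.findall(source))
    return sorted(found - set(inventory))


if __name__ == "__main__":
    # Made-up snippet and inventory, purely for illustration.
    snippet = 'client = connect(user="svc-billing")\nrun_as("svc-ghost-writer")\n'
    known = {"svc-billing", "svc-reporting"}
    print(unknown_service_accounts(snippet, known))
```

A check like this is cheap enough to run in a pre-commit hook or at the start of the pipeline, so the mismatch is caught before the code is deployed.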

Key takeaways: how to build for 2026 now

- The operating model is changing. Rigid platforms give way to flexible workflows, and small teams gain leverage through AI tools that compress enterprise-level work.

- The shift starts with different questions. Instead of “which vendor covers everything,” ask “what do I need to see right now, and can I build it in minutes?”

- The teams that adapt fastest won’t be the ones with the biggest budgets. They’ll be the ones who stop carrying waste, embrace transparent automation, and use AI to multiply their impact.

- The question is whether you’ll lead that change or get forced into it when the renewal committee cuts half your stack.

Your #1 New Year’s resolution?

Getting started with Sola.


VP Marketing, Sola Security

A creative strategist with a sharp eye for detail, Minnie has led product marketing and content for industry-defining companies like AppsFlyer and Payoneer. Once wrote a LinkedIn post that racked up a gazillion views and was featured by BuzzFeed and the Daily Mail.